
It demonstrated two different approaches to a key piece of the puzzle: calculating the total distance the two missiles travel in one minute. Without using pencil and paper, calculate how far apart they are one minute before they collide.”ĬhatGPT made a solid effort on this one. How about something with more math? In Gardner’s words from his August 1958 column, “Two missiles speed directly toward each other, one at 9,000 miles per hour and the other at 21,000 miles per hour. But I actually think that all the instances in which it gets logic right-it’s just proof that there was a lot of that logic out there in the training data,” Toyama says. “When it fails, it looks like it’s a spectacularly weird failure. That’s exactly the same reasoning we suggested in 2014.īut ChatGPT’s easy victory in this case may just mean it already knew the answer-not necessarily that it knew how to determine that answer on its own, according to Kentaro Toyama, a computer scientist at the University of Michigan. If the lightbulb is off and cold, the third switch works. If the lightbulb is off but warm, the first switch works. If the lightbulb is on, the second switch works. When I fed this into the AI, it immediately suggested turning the first switch on for a while, then turning it off, turning the second switch on and going upstairs. Without leaving the third floor, can you figure out which switch is genuine? You get only one try.” Then go to the third floor to check the bulb. Put the switches in any on/off order you like.

The other two switches are not connected to anything. Only one operates a single lightbulb on the third floor. As described in the 2014 tribute, “ There are three on/off switches on the ground floor of a building. Puzzle 1įirst, let’s explore a true logic problem. Here’s how some relatively simple puzzles can illustrate this crucial difference between the ways silicon and gray matter process information. “It might sound like it is reasoning however, it is bound by its data set.” The AI “does not have reasoning capabilities it does not understand context it doesn’t have anything that is independent of what is already built into its system,” says Merve Hickok, a data science ethicist at the University of Michigan. After that humans train the system, teaching it what types of responses are best to various kinds of questions users might ask-particularly regarding sensitive topics. Then ChatGPT learns to statistically identify what word is most likely to follow a previous word in order to construct a response. This is a deep-learning system that has been fed a huge amount of text-whatever books, websites and other material the AI’s creators can get their hands on. The results ranged from satisfactory to downright embarrassing-but in a way that offers valuable insight into how ChatGPT and similar artificial intelligence systems work.ĬhatGPT, which was created by the company OpenAI, is built on what’s called a large language model.

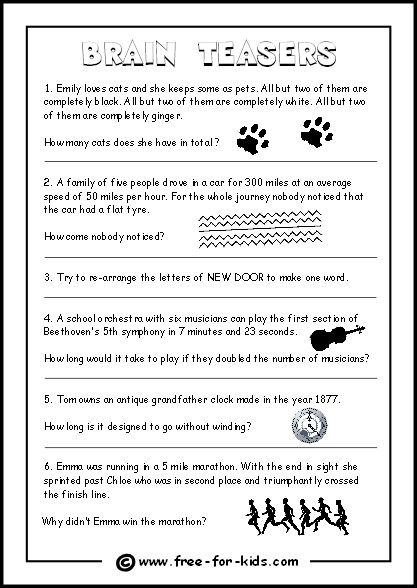

I tested ChatGPT on a handful of text-based brainteasers described by Gardner or a 2014 tribute to his work by mathematician Colm Mulcahy and computer scientist Dana Richards in Scientific American. But Scientific American wanted to see what would happen if the bot went head to head with the legacy of legendary puzzle maker Martin Gardner, longtime author of our Mathematical Games column, who passed away in 2010.

It turns out that if you want to solve a brainteaser, it helps to have a brain.ĬhatGPT and other artificial intelligence systems are earning accolades for feats that include diagnosing medical conditions, acing an IQ test and summarizing scientific papers.
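For readers who want to check the arithmetic in Gardner’s missile puzzle, here is a minimal Python sketch of two ways to get the total distance the missiles cover in one minute. The function names are hypothetical and chosen for illustration, and it is an assumption that these two routes resemble the ones ChatGPT showed. Both rest on the same observation: the gap one minute before impact equals the distance the two missiles close in that final minute.

```python
MINUTES_PER_HOUR = 60


def gap_from_closing_speed(speed_a_mph: float, speed_b_mph: float) -> float:
    """Add the two speeds first, then convert the closing speed to miles per minute."""
    closing_speed_mph = speed_a_mph + speed_b_mph
    return closing_speed_mph / MINUTES_PER_HOUR


def gap_from_individual_distances(speed_a_mph: float, speed_b_mph: float) -> float:
    """Work out each missile's distance in one minute, then add the two distances."""
    distance_a_miles = speed_a_mph / MINUTES_PER_HOUR
    distance_b_miles = speed_b_mph / MINUTES_PER_HOUR
    return distance_a_miles + distance_b_miles


if __name__ == "__main__":
    # Speeds taken from the puzzle: 9,000 mph and 21,000 mph.
    print(gap_from_closing_speed(9_000, 21_000))         # 500.0
    print(gap_from_individual_distances(9_000, 21_000))  # 500.0
```

Either way the answer is 500 miles, and the starting distance between the missiles never enters the calculation.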
